Biometric security, whether fingerprint, facial or iris recognition, is fast emerging as the preferred way to safeguard the data of companies and individuals from threat actors. However, while biometric identification may be less prone to theft and spoofing than passwords, it’s still vulnerable to hacking.

Dubai has always been at the cutting edge, so it is no surprise that the emirate’s authorities have embraced biometric technology with enthusiasm.

As was widely reported at the time, 120 latest-generation smart gates were installed at Terminal 3 of the city’s airport late last year. These dramatically cut the time passengers spend going through passport control by using facial recognition technology, plus a form of ID.

This summer, things will go a step further when the airport introduces a “smart tunnel” with dozens of cameras to analyse the face or the eyes. Travellers using it will not have to pass through a passport gate, smart or otherwise, on the way to their flight.

The use of biometric authentication at Dubai International Airport mirrors the situation at airports across the world – and in myriad other contexts.

From unlocking computers to gaining entry to offices, its use is becoming routine. We can secure access to bank accounts thanks to voice recognition; to tablets using fingerprints; and to smartphones through facial biometrics, to give just a few examples. There is also retinal scanning, based on the pattern of blood vessels in the eye’s inner coat, and iris recognition, which uses mathematical methods to identify the coloured part of the eye.

In future, we might be able to walk into our favourite store in a UAE shopping mall and make purchases without a bank card; instead, the unique shape of our ears, photographed by an in-store camera, will identify us.

A recent survey by Spiceworks, a Texas-based IT professional network, found that biometric authentication is now used by almost two-thirds of organisations and, within the next two years, most of the remainder will get on board. So by the end of 2020, nearly nine out of 10 organisations may rely on biometrics.

Yet for all its growing ubiquity, biometric authentication is often not trusted to do the job alone: just one in 10 of the IT professionals Spiceworks surveyed thought that the technology was secure enough to be used without an additional form of security.

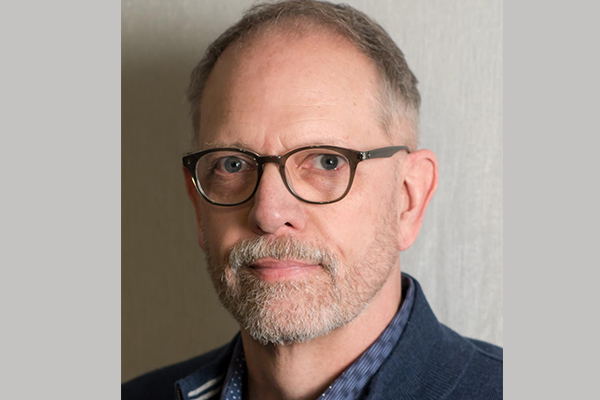

“I don’t see it replacing passwords for the near future, but if the improvements that we see continue, you can see in future why it would wipe away passwords. But it will be used more … along with passwords and pin codes,” said Kevin Curran, professor of cyber security at Ulster University in the United Kingdom.

A major concern associated with biometrics is the risk of the data being stolen. An advantage of passwords and PINs is that they can be easily changed, but the loss of biometric data is potentially much more concerning. Our fingerprints or voices may be unique, but if this data is lost, the consequences are far-reaching because, unlike a password, it cannot be reissued.

“A password is something people know and it’s easy to change it and to update it. But with fingerprints or face ID, if they’re stolen, they’re gone,” said Curran.

There are multiple risks that can result in biometric data being hacked, according to Michael Fauscette, the chief research officer at G2 Crowd, a Chicago-headquartered platform for peer-to-peer reviews of business solutions.

“The security risks associated with biometric data are very similar to any other personal data: once the data is stored somewhere, it can be hacked,” he said.

“Moving the data from the sensor to the repository is also a risk point, and must include data encryption to prevent hijacking.”

Fauscette said data held in storage also carries a “large risk”, but there are other concerns too: the process of setting up the system, sometimes called enrolment, is a potential weak point.

“If the enrolment process doesn’t include positive identification, then the whole system is at risk from the start. The wrong person’s biometric data could be used and [become associated with] a different person,” he said.

“Or, if the enrolment process includes a comparison of biometric data to some central repository as a way to validate identity, there is risk to the data in transit if it is not encrypted.”
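Fauscette's point about encrypting data in transit can be illustrated with a minimal sketch. It assumes the sensor forwards an already-extracted template to the repository over a plain TCP connection wrapped in TLS; the function names, host and port are hypothetical, and a real deployment would add its own message format and authentication.

```python
import socket
import ssl


def make_transit_context() -> ssl.SSLContext:
    """Build a TLS client context that verifies the server certificate."""
    # create_default_context() enables certificate and hostname checks by default.
    ctx = ssl.create_default_context()
    # Refuse protocol versions older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def send_template(host: str, port: int, template: bytes) -> None:
    """Send a biometric template over an encrypted channel (hypothetical helper)."""
    ctx = make_transit_context()
    with socket.create_connection((host, port), timeout=5) as raw_sock:
        # Nothing is sent until the TLS handshake and hostname check succeed.
        with ctx.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            tls_sock.sendall(template)
```

The context rejects servers whose certificate cannot be verified, so the template is never sent to an impostor repository in the clear.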

When it comes to the day-to-day use of biometric authentication, a key vulnerability is that hackers can use image-generation software and machine learning to overcome defences.

“Attackers today know what to expect if an authentication challenge only asks them to smile or blink, so they can produce a blinking model or smiling face in real time relatively easily,” said Uzun.

Indeed, Uzun said that many third-party cloud-based services providing audio and facial authentication to other large organisations rely on methods prone to primitive spoofing attacks.

“A common mistake of current systems is that they still use pretty plain challenges, such as just smiling and blinking, to prevent spoofing attacks. Any system that uses a fixed challenge like that is not secure. The challenge should always be randomised. We feel strongly about this,” he said.

Uzun, who was previously a research assistant at New York University Abu Dhabi, has worked with his co-researchers at Georgia Tech to introduce a method that involves such a randomised challenge.

Presented at a recent conference in San Diego, their solution involves Captcha (Completely Automated Public Turing test to tell Computers and Humans Apart) methods, which ensure that the user is a human, not a machine. A simple Captcha method might involve asking the user to type a word or a number that is displayed in distorted letters.

“Captcha has been used for years to prevent bot attacks, such as fake account creation, to many web services of major companies like Facebook, Amazon and Google. On the other hand, adversaries have been trying to build sophisticated Captcha-breaking mechanisms based on machine learning and deep learning,” said Uzun.

Uzun and his colleagues, including Professor Wenke Lee and Simon Pak Ho Chung, have developed a real-time Captcha method (rtCaptcha) that requires the user to look into a smartphone camera and answer a randomly chosen question within a short time window.

“rtCaptcha strengthens the computational challenge by forcing adversaries to figure out what the authentication tasks are and quickly combine them – synchronising the voice, face and personal knowledge of an individual in a way that appears lifelike,” said Uzun.

“We force attackers to show, share and say what only an individual could know – and do that in less than two seconds.”

While tests showed that humans take a maximum of about a second to respond, it takes machines at least six seconds to understand the question and then produce faked video and audio.
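The timing idea can be sketched in a few lines. This is not the researchers' implementation: it is a minimal illustration, assuming a challenge made of four random digits and a two-second response window taken from the figure quoted above. All names are hypothetical, and the face and voice checks that rtCaptcha combines with the challenge are left out.

```python
import secrets
from dataclasses import dataclass

# Assumed window, based on the "less than two seconds" figure quoted above.
RESPONSE_WINDOW_SECONDS = 2.0


@dataclass(frozen=True)
class Challenge:
    prompt: str       # what the user is asked to do
    expected: str     # the answer the system will accept
    issued_at: float  # timestamp in seconds when the challenge was shown


def issue_challenge(now: float) -> Challenge:
    """Create a fresh, unpredictable challenge for every attempt."""
    code = "".join(secrets.choice("0123456789") for _ in range(4))
    return Challenge(f"Read these digits aloud: {code}", code, now)


def verify(challenge: Challenge, answer: str, answered_at: float) -> bool:
    """Accept only a correct answer that arrives inside the time window."""
    elapsed = answered_at - challenge.issued_at
    in_time = 0 <= elapsed <= RESPONSE_WINDOW_SECONDS
    correct = answer.strip() == challenge.expected
    return in_time and correct
```

With these assumptions, a person answering correctly after one second is accepted, while a correct answer that takes six seconds to produce is rejected, which is the gap the researchers rely on.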

“Our goal in rtCaptcha was to combine as many modern authentication methods as possible in a way that could always be randomised for the strongest security while preserving the usability of the authentication system,” added Uzun.

While rtCaptcha is a promising method to help make biometric authentication more secure, Uzun is under no illusions that those working to improve the security of biometric authentication will ever be able to rest on their laurels. It is a cat-and-mouse game.

“As long as there is reward behind the risk, hackers will continue to attempt to break any new security method. We have to make breaking security financially disadvantageous, extraordinarily time consuming and also computationally burdensome,” said Uzun.